When a CrowdStrike alert catches a suspicious executable, the instinct is to confirm it's malicious, quarantine it, and close the ticket. But what if the file was just the last step in a chain that started weeks earlier, and that chain is still active? In this real anonymized case, Qevlar AI starts from one EDR alert and autonomously surfaces 7 new observables, tracing a coordinated phishing campaign targeting 40 employees, an active account takeover, and an attacker already inside the network.

When a CrowdStrike alert fires on a suspicious executable, the instinct is to check the file, confirm it's malicious, quarantine it, and move on. The endpoint is clean. The ticket is closed.

But what if the executable was just the last step in a chain that started weeks earlier, and that chain is still running?

This is a real anonymized case where a routine EDR detection turned out to be the visible tip of an active phishing campaign. We'll walk through exactly what enrichment would have revealed, where it would have stopped, and what autonomous investigation uncovered beyond it.

All names, devices, and company identifiers have been anonymized.

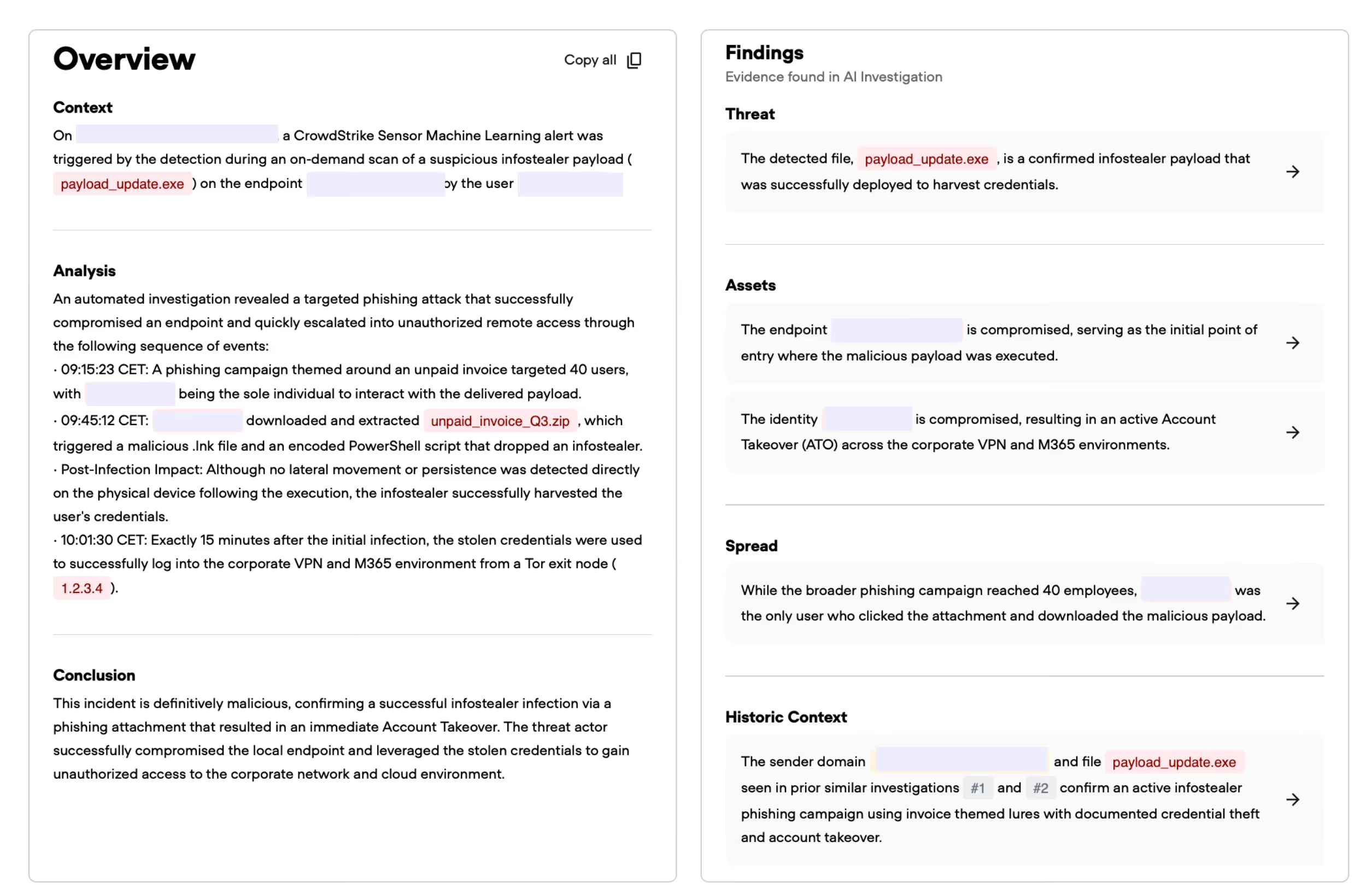

The investigation begins with a CrowdStrike Sensor Machine Learning alert: a suspicious file, payload_update.exe, was detected and quarantined on a finance department endpoint.

At first glance, this looks contained. The file was caught, and the endpoint was protected. A standard enrichment workflow would confirm exactly that and close the case.

But Qevlar AI treated this alert as a starting point.

Before showing what the investigation added, it's worth being precise about what enrichment does well here, because it does quite a lot, and about the critical information it would miss.

On the original alert, an alert triage solution would have surfaced:

File reputation: payload_update.exe comes back malicious across multiple threat intelligence sources. VirusTotal confirms it. Sandbox identifies it as an infostealer. The file hash has been seen before.

Device profile: The affected endpoint is in the finance department: high criticality, production machine, domain-joined. Asset context is attached.

User profile: The user account associated with the endpoint is pulled from AD. Role, department, and seniority are attached to the alert.

Process context: The file was executed via a PowerShell script running with hidden window parameters, which is a known evasion technique. The parent process chain is suspicious.

Endpoint behavior: No lateral movement or persistence mechanisms detected directly on the device at the time of detection.
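To make the process-context check concrete, here is a minimal sketch of how a command line could be flagged for a hidden window and an encoded command. The function name and the regular expressions are assumptions for illustration: they cover only common spellings of the PowerShell flags and do not represent how CrowdStrike or Qevlar AI implement this check. The decoding step relies on the fact that PowerShell's `-EncodedCommand` takes a Base64 string of UTF-16LE text.

```python
import base64
import re

# Illustrative patterns only: PowerShell accepts many abbreviations of these
# flags, and this sketch matches just a few common ones.
HIDDEN_WINDOW = re.compile(
    r"(?<!\S)-w(?:in(?:dowstyle)?)?\s+(?:hidden|1)\b", re.IGNORECASE
)
ENCODED_CMD = re.compile(
    r"(?<!\S)-e(?:nc(?:odedcommand)?)?\s+([A-Za-z0-9+/=]+)", re.IGNORECASE
)


def inspect_powershell(cmdline: str) -> dict:
    """Report whether a command line hides its window and decode any encoded command."""
    hidden = bool(HIDDEN_WINDOW.search(cmdline))
    match = ENCODED_CMD.search(cmdline)
    decoded = None
    if match:
        try:
            # -EncodedCommand payloads are Base64 over UTF-16LE text.
            raw = base64.b64decode(match.group(1), validate=True)
            decoded = raw.decode("utf-16-le")
        except ValueError:
            # Covers invalid Base64 and invalid UTF-16 alike.
            decoded = None
    return {"hidden_window": hidden, "decoded_command": decoded}
```

A command line that combines both flags is not proof of malice on its own, but it is a reasonable signal to attach to the alert for an analyst to review.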

The enrichment verdict is clear: this is a confirmed infostealer payload, successfully deployed on a finance endpoint. Block the hash. Isolate if needed. Close the ticket.

That verdict is correct. And it is dangerously incomplete.

Here's what enrichment cannot tell you, because none of these questions are answerable from what's inside the original alert:

How did payload_update.exe get onto the machine in the first place?

Answering these questions requires generating new hypotheses, querying systems that weren't referenced in the original alert, and following observables that don't exist yet at the time of detection. That's investigation, and that's where Qevlar AI excels.

Qevlar begins with the two observables present in the original alert: the file and the endpoint.

Step 1: File reputation

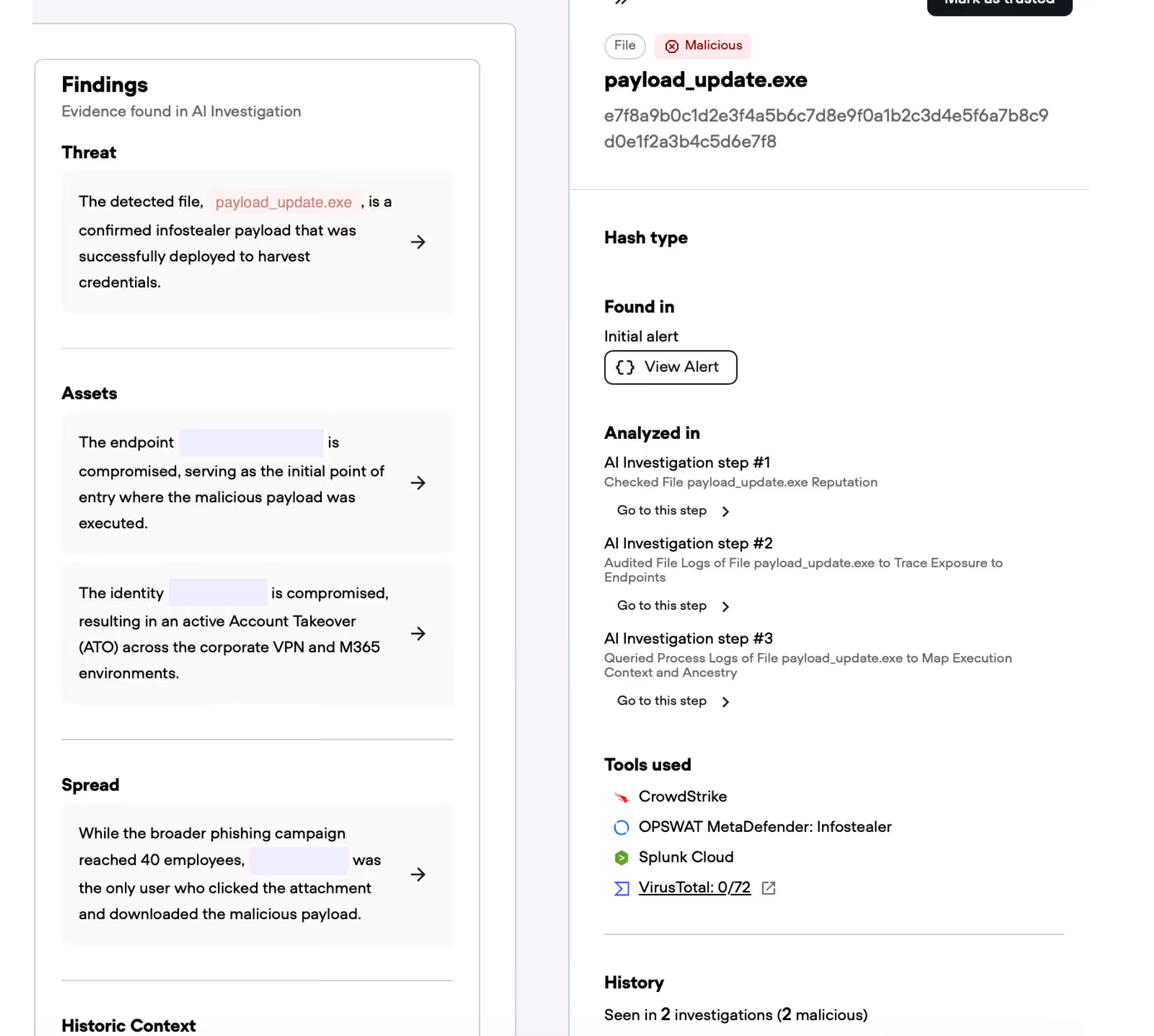

For the file, Qevlar runs CTI checks, sandboxes it, and uses its proprietary web search agent to profile it against known threat infrastructure. Notably, VirusTotal returned 0 detections at the time of analysis: the payload was unknown to most engines. Sandbox behavior analysis is what confirmed the infostealer verdict.

Step 2: File log audit

Qevlar then queries CrowdStrike and Splunk to audit the file's logs and trace its exposure across the environment. The query confirms the file was isolated to a single endpoint, with no lateral file spread detected at this stage.

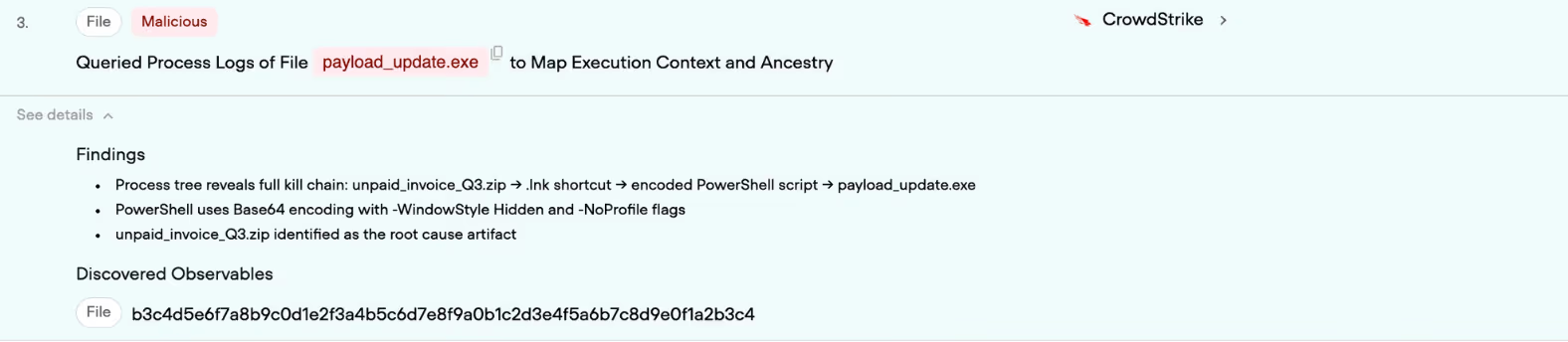

Step 3: Process log query

Qevlar queries CrowdStrike process logs to map the full execution ancestry of the file. This reveals the complete kill chain: a zip archive had been extracted, triggering a .lnk shortcut that launched an encoded PowerShell script with hidden window parameters, which then dropped the payload. This is where the zip archive surfaces as a new observable — not present in the original alert, and not something any enrichment workflow would have queried.
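Mapping execution ancestry comes down to following parent process IDs upward from the detected file. The sketch below shows that walk over a simplified list of process events. The `ProcEvent` shape and the sample PIDs are hypothetical, not the case's actual telemetry, and real EDR data would also need process start times to cope with PID reuse.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcEvent:
    pid: int
    ppid: int
    image: str


def ancestry(events: list[ProcEvent], pid: int) -> list[str]:
    """Return image names from the oldest known ancestor down to `pid`."""
    by_pid = {event.pid: event for event in events}
    chain: list[str] = []
    seen: set[int] = set()
    # Walk parent links until we leave the recorded events or hit a loop.
    while pid in by_pid and pid not in seen:
        seen.add(pid)
        event = by_pid[pid]
        chain.append(event.image)
        pid = event.ppid
    return list(reversed(chain))


# Hypothetical events loosely mirroring the chain described above.
SAMPLE_EVENTS = [
    ProcEvent(pid=100, ppid=1, image="explorer.exe"),
    ProcEvent(pid=200, ppid=100, image="powershell.exe"),
    ProcEvent(pid=300, ppid=200, image="payload_update.exe"),
]
```

Calling `ancestry(SAMPLE_EVENTS, 300)` walks from the payload back to the shell that launched it. Every ancestor found this way is a candidate for a new observable.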

For the endpoint, Qevlar queries CrowdStrike across multiple log tables simultaneously — network events and process events — to map execution context and check for anomalous behavior on the device.

This is where the investigation expands beyond the alert boundary. Qevlar surfaces a new observable.

Buried in the file logs is a reference to a second file: unpaid_invoice_Q3.zip. This archive was not in the original alert. It was downloaded by the user shortly before execution, and it contained the .lnk file and encoded PowerShell script that dropped the infostealer.

This is a potential root cause and a new thread to pull.

At the same step, Qevlar extracts a second new observable: the user account that was authenticated on the device during the file drop window. This identity wasn't referenced in the original alert. It becomes the pivot point for Stage 2.

With two new observables now in scope — the zip archive and the user identity — Qevlar pivots.

On the user: Qevlar queries the last 30 days of authentication logs, baselines normal activity, and immediately detects an anomaly. Approximately 15 minutes after payload execution, a successful VPN authentication is recorded from an IP address identified as a Tor exit node with a high abuse confidence score. Simultaneously, a valid Microsoft 365 session is opened from the same IP. The authentication used a "previously satisfied" token claim, strongly suggesting session cookie theft, bypassing MFA.

The user's account is actively compromised. The attacker is inside.
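The baselining step can be pictured as a comparison between the source IPs seen in the 30 days before execution and the logins that follow it. This is a minimal sketch under stated assumptions: the record fields, the one-hour follow-up window, and the function name are all hypothetical, and the IP addresses used to exercise it should come from documentation ranges rather than real infrastructure.

```python
from datetime import datetime, timedelta


def flag_anomalous_logins(
    logins: list[dict],
    execution_time: datetime,
    tor_exit_ips: set[str],
    baseline_days: int = 30,
    window: timedelta = timedelta(hours=1),
) -> list[dict]:
    """Flag post-execution logins from IPs absent from the user's baseline."""
    baseline_start = execution_time - timedelta(days=baseline_days)
    # Source IPs the user logged in from during the baseline period.
    known_ips = {
        login["ip"]
        for login in logins
        if baseline_start <= login["time"] < execution_time
    }
    flagged = []
    for login in logins:
        in_window = execution_time <= login["time"] <= execution_time + window
        if in_window and login["ip"] not in known_ips:
            # Annotate rather than filter, so non-Tor anomalies still surface.
            flagged.append({**login, "tor_exit": login["ip"] in tor_exit_ips})
    return flagged
```

An unfamiliar IP alone is weak evidence. It is the combination with a Tor exit node, a session on a second service from the same address, and the timing relative to payload execution that makes the finding strong.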

Before pivoting to the zip archive, Qevlar inspects the user's web and file activity in the window prior to execution. This surfaces the download event: the zip archive was retrieved via a browser approximately 2 minutes before payload execution and opened directly from the Downloads folder. This confirms the user actively interacted with the malicious attachment — and gives Qevlar the thread it needs to trace the delivery chain back to its origin.

File reputation: The zip archive is submitted for reputation analysis. VirusTotal returned only 3 detections out of 72 engines — a low score that would not trigger an alert in a standard enrichment workflow. Qevlar's proprietary TrueSignal technology flagged it as definitively malicious, identifying it as a Phishing.ZIP.InfoStealer delivery vehicle. The discrepancy between vendor consensus and TrueSignal's conviction is precisely the kind of gap that lets evasive payloads slip through.

File log audit: Qevlar audits the zip archive's file logs across CrowdStrike and Splunk to trace its exposure. The archive is traced back to an email attachment via CrowdStrike email metadata, which yields a specific message ID — a new observable that points directly to the phishing email that initiated the chain.

Process log query: A process log query on the zip confirms no execution branches beyond the already identified chain. The full kill chain is now mapped: phishing email → zip archive → .lnk shortcut → encoded PowerShell script → infostealer payload.
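To see why 3 detections out of 72 engines can pass unnoticed, consider a rule that alerts only when the share of detecting engines crosses a fixed threshold. The 10% threshold below is an assumption chosen for illustration, not a value taken from this case or from any vendor, and the sketch says nothing about how TrueSignal reaches its verdict.

```python
def consensus_verdict(detections: int, engines: int, threshold: float = 0.10) -> tuple[float, bool]:
    """Return the detection ratio and whether it meets the alert threshold."""
    if engines <= 0:
        raise ValueError("engines must be positive")
    ratio = detections / engines
    return ratio, ratio >= threshold


# The zip archive's score from the case: 3 of 72 engines.
ratio, alerts = consensus_verdict(3, 72)
```

With these inputs the ratio is roughly 4%, below the assumed threshold, so a consensus-only rule stays silent on a file that delivered an infostealer.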

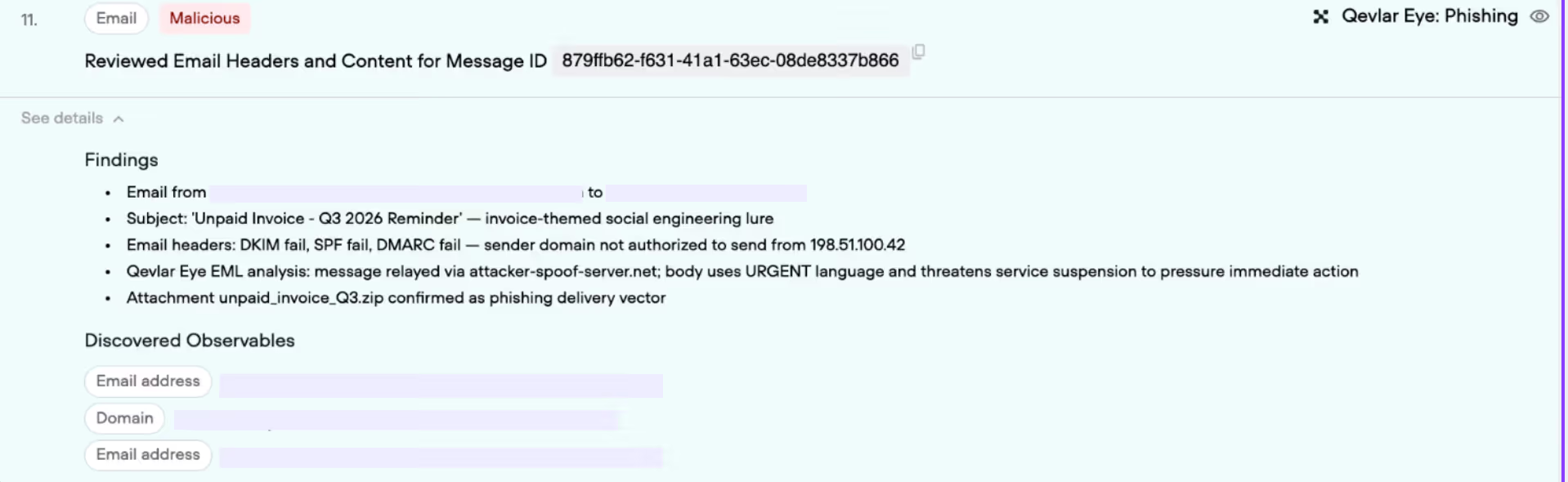

On the email: Qevlar applies QevlarEye, its proprietary semantic and visual analysis engine, to the email. The analysis is unambiguous: the email impersonates a legitimate accounting service, uses invoice-themed urgency tactics, and was sent from a domain designed to appear credible at a glance. It is a phishing email.

This single discovery reframes the entire investigation. What started as an EDR alert is now a phishing-delivered infostealer with confirmed account takeover.

But Qevlar keeps going.

On the campaign: Qevlar queries email logs across the organization to identify how many employees received emails from the same sending domain. The results surface a coordinated phishing campaign: 40 employees received emails with the same malicious attachment, sent over a period of several days.

Of those 40, only one user downloaded the attachment and executed the payload. The blast radius is assessed: one account compromised, no lateral movement detected beyond the initial VPN session, no persistence mechanisms deployed on other endpoints.
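Scoping the campaign amounts to two set operations: collect everyone who received mail from the sending domain, then intersect that set with the users whose endpoints show execution. The sketch below assumes simplified log records with `sender` and `recipient` fields. The field names, function name, and any addresses used with it are hypothetical.

```python
def scope_campaign(
    email_logs: list[dict],
    sender_domain: str,
    executed_users: set[str],
) -> dict:
    """Summarize who received mail from a domain and who went on to execute the payload."""
    domain = sender_domain.lower()
    recipients = {
        message["recipient"].lower()
        for message in email_logs
        # Compare on the part after the last "@" to match the sending domain.
        if message["sender"].rsplit("@", 1)[-1].lower() == domain
    }
    executed = recipients & {user.lower() for user in executed_users}
    return {
        "recipient_count": len(recipients),
        "executed": sorted(executed),
        "not_executed_count": len(recipients - executed),
    }
```

The recipients who did not execute the payload still matter: the message is sitting in their mailboxes and should be removed before someone opens it.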

By the end of the investigation, Qevlar had extracted 7 observables that were not present in the original alert:

The zip archive (unpaid_invoice_Q3.zip): the delivery mechanism

Historic context matters here: it confirmed this wasn't a one-off. The same infrastructure had been running a campaign for weeks, using invoice-themed lures across multiple targets. The current investigation was the third time this actor had touched the organization.

Starting from a single EDR alert — a file detected and quarantined on one endpoint — Qevlar's autonomous investigation produced a complete attack chain:

A phishing campaign targeting 40 employees delivered a malicious invoice attachment. One employee opened it, executed the payload, and had their credentials stolen by an infostealer. The attacker used those credentials to authenticate via Tor into both the corporate VPN and Microsoft 365 environment, bypassing MFA through session cookie theft. The compromise was active at the time of the investigation.

Enrichment confirmed the file was malicious. Investigation revealed the campaign was ongoing.

Enrichment is a necessary first step. It tells you what is known about what's already in the alert: quickly, automatically, and consistently. In this case, it correctly identified a malicious file on a compromised endpoint.

But enrichment operates within a predefined scope. It annotates what it was given. It cannot ask what happened before the alert fired, who else was affected, or whether the attacker is still present.

Investigation does. It starts from what the alert implies, generates new questions, discovers new observables, and follows the chain until the full picture is visible.

In this case, the difference between enrichment and investigation was the difference between a closed ticket and a contained campaign.